Silicon Valley’s Year in Hate

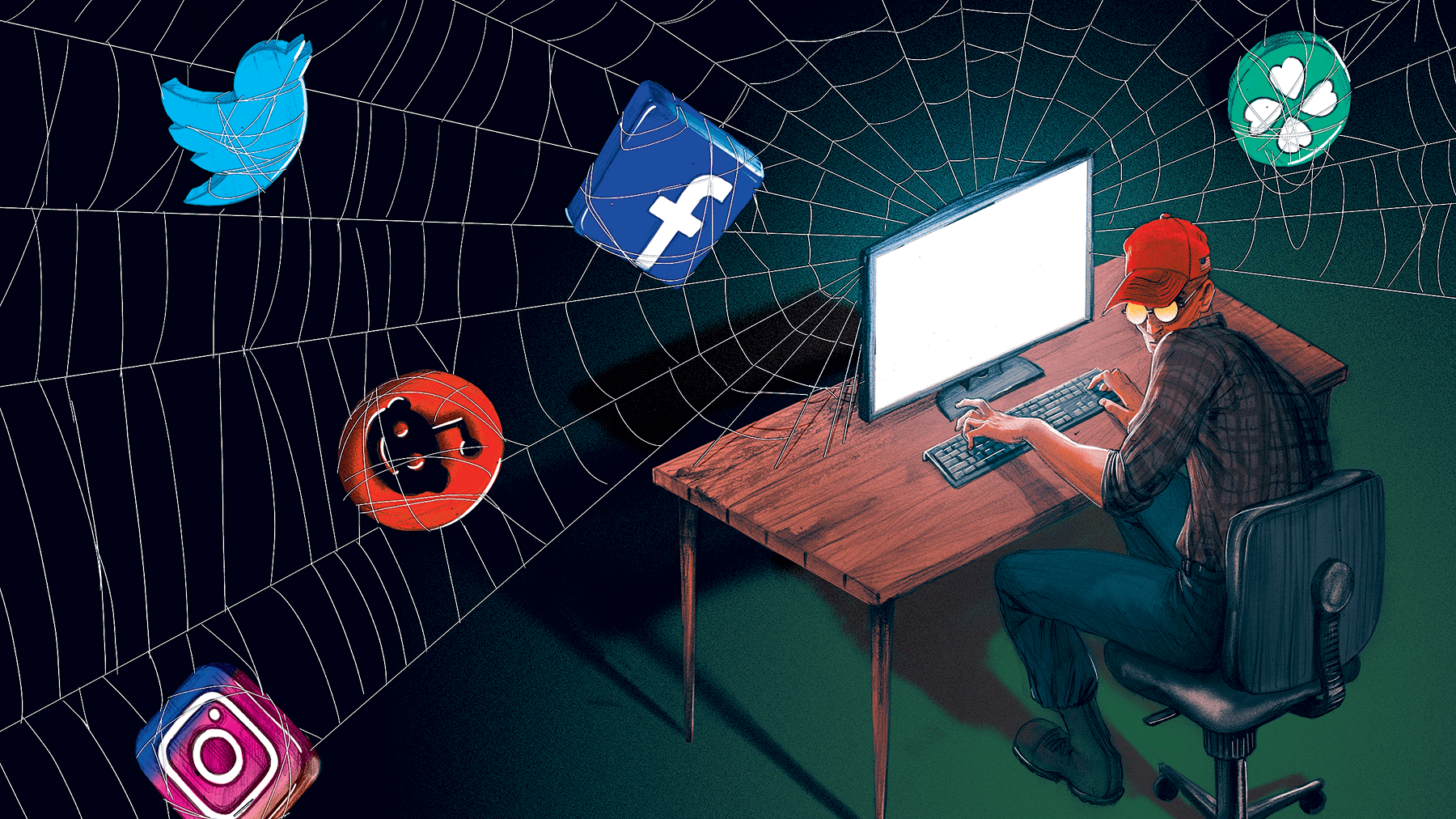

On Oct. 26, 2018, authorities arrested 56-year-old Cesar Sayoc in South Florida after he mailed 14 crudely constructed pipe bombs to outspoken critics of President Trump over the span of five days.

Before mailing the bombs, Sayoc trafficked in hate and conspiracy online.

His social media accounts were dedicated to extreme right-wing conspiracy theories attacking prominent liberals, such as philanthropist George Soros and Bill and Hillary Clinton. He also regularly circulated conspiratorial content about undocumented immigrants and Islamic terrorism, and he reportedly told a former co-worker, “Everybody that wasn’t white and wasn’t a white supremacist didn’t belong in the world.”

One subject of Sayoc’s harassment, Rochelle Ritchie, a regular Fox News panelist, reported one of Sayoc’s threats to Twitter’s Trust and Safety team.

“So you like make threats,” Sayoc wrote to Ritchie from his account @hardrock2016. “We Unconquered Seminole Tribe will answer your threats. We have nice silent Air boat ride for u here on our land Everglades Swamp. We will see you 4 sure. Hug your loved ones real close every time you leave you home.”

Twitter responded on Oct. 11, 2018, informing Ritchie that Sayoc’s threat did not violate the company’s policies on abusive behavior. Eleven days later, pipe bombs began arriving at the homes of Sayoc’s enemies.

It took five days and Sayoc’s arrest on charges including “illegal mailing of explosives, threats against former presidents and others and assaulting current and former federal officers” for Twitter to acknowledge that it had made an error. Only then did the company delete Sayoc’s account.

This episode is merely a single, recent example highlighting Silicon Valley’s inability — and unwillingness — to address rampant abuse of social media platforms by extremists and the increasingly violent consequences.

There is a disconnect between the point of view of tech company leaders and the toxicity that users of their platforms have to endure. It was on plain display in July 2018 when Mark Zuckerberg, CEO of Facebook, told Recode that those who deny the Holocaust weren’t “intentionally getting it wrong.” This stunning moment of myopia received swift criticism.

Deborah Lipstadt, the Dorot Professor of Holocaust Studies at Emory University and a world-renowned expert on denialism, called Zuckerberg’s comments “breathtakingly irresponsible.” The German Justice Ministry rebuked the CEO, reminding the company that Holocaust denial is punishable by law in Germany and that Facebook is presently liable for such content.

Zuckerberg’s remarks on Holocaust denial — which he later clarified, stating, “I personally find Holocaust denial deeply offensive, and I absolutely didn’t intend to defend the intent of people who deny that” — came 77 days after the company consented to an independent civil rights audit to assess its impact on underrepresented communities and communities of color.

Showing its total misunderstanding of the gravity of the situation, Facebook announced its civil rights audit at the same time it announced a separate audit into whether the company censors conservative voices, to be led by Republican Sen. Jon Kyl of Arizona. That Facebook would draw an equivalence between civil rights and the possible censoring of conservatives, and then choose Sen. Kyl, who has a history of appearing with anti-Muslim extremists, was an astounding move.

Highly motivated hate groups are metastasizing on the internet and social media despite warnings from civil rights organizations, victims of harassment and bigotry online, and those involved in increasingly deadly tragedies. Tech companies do not fully understand hate groups and how they affect users. And their actions remain insufficient.

How Hate Groups Really Use Social Media

Tech companies fail to take seriously the hate brewing on their platforms, waiting until hate-inspired violence claims its next victim before taking meaningful enforcement action.

This was the case after Dylann Roof murdered nine black parishioners in 2015 and after the riot that left one dead and nearly 20 injured in Charlottesville, Virginia, at the “Unite the Right” rally in 2017.

Energized by the racist “alt-right” and emboldened by the Trump administration, the rally was the largest public demonstration of white supremacists in a generation. The rally was organized on Facebook, as was, for a period of time, its sequel in August 2018.

Despite several crackdowns, tech companies struggle to understand and address how hate groups use online space to organize, propagandize and grow.

Most of the white nationalist and neo-Nazi groups operating today are no longer traditionally structured organizations led by known figures.

Instead, hate groups are now mainly loosely organized local chapters formed around propaganda hubs, operating almost entirely online. These local-level chapters — such as the neo-Nazi Daily Stormer’s “Book Clubs,” the white nationalist The Right Stuff’s “Pool Parties,” or even the violent neo-Nazi Atomwaffen Division’s regional cells — imbibe propaganda and trade in violent, hate-laden content and rhetoric meant to dehumanize their targets and desensitize online consumers.

The increase in white nationalist and neo-Nazi hate group chapters in 2018, as well as the uptick in their propaganda campaigns and violence, shows that these digitally savvy groups are flourishing in spite of Silicon Valley’s promises to police them.

Another Year of Inconsistent Enforcement

As user growth accelerated on social media platforms, so, too, did the presence of hate groups and their leaders, finally able to transcend geographic boundaries and enjoy unprecedented audiences for their carefully tuned propaganda.

While official groups and pages for hate organizations and some of their leaders have been deplatformed, tech companies have allowed countless unofficial groups, led by the very same figures and populated by the same devotees, to prosper despite repeated warnings.

For example, when the Southern Poverty Law Center presented Facebook with examples of outwardly violent images and rhetoric targeting vulnerable communities — such as Muslims and immigrants — produced by both anonymous and known leaders in the hate movement, the company largely declined to take action.

The consequences of inaction were on full display in August, when Facebook declined to ban the Proud Boys, a hate group most notable for its history as an early waypoint for extremists who later join overtly white supremacist organizations. The company allowed the group to continue operating recruitment and new member vetting pages on the platform even after the Proud Boys led a violent protest in Portland last June that police ultimately declared a riot. When provided documentation of the Proud Boys’ use of the platform, Facebook ruled that the group did not violate its community standards, which include provisions against “potential real-world harm that may be related to content on Facebook.” Despite open glorification of violence, as well as a multitude of videos documenting beatings by Proud Boys, Facebook allowed the group to remain on the site.

Two months later, nine members of the Proud Boys were sought by authorities in New York on charges of rioting, assault and attempted assault after a brawl outside the Metropolitan Republican Club, where the group’s leader at the time, Gavin McInnes, was speaking.

Twitter opted to suspend McInnes’ account a week after the New York incident under a policy “prohibiting violent extremist groups,” the precise policy that Facebook did not find sufficient evidence to enforce after the Portland riot.

In August, Jack Dorsey, CEO and co-founder of Twitter, announced that the company would not ban the account of far-right conspiracy theorist Alex Jones, despite action being taken against Jones by other major companies including Apple, Spotify, Facebook and YouTube, because Jones hadn’t violated the platform’s rules. While defending his decision, Dorsey acknowledged Twitter’s shortcomings in communicating its policies and declared, “We’re going to hold Jones to the same standard we hold to every account, not taking one-off actions to make us feel good in the short term, and adding fuel to new conspiracy theories.”

The next day, Dorsey called into right-wing media personality Sean Hannity’s radio show to assure listeners that Twitter wasn’t censoring conservative voices.

One month later, Twitter banned Jones for violating its policies on abusive behavior after Jones harassed a CNN journalist outside of a congressional hearing at which Dorsey was testifying.

This lack of consistency across platforms is glaring. Social media companies are typically the first to point out that each platform is unique and involves different sets of considerations when assessing policy violations. But this keeps the door open for hate groups to slide back onto platforms or avoid enforcement altogether.

Jones and Infowars still have a presence on Facebook, including a personal page, a public page for streaming content and a closed group with more than 100,000 members. While Facebook banned several of his most prominent pages, Jones was allowed to continue peddling his conspiracies on the site. And while Twitter banned Jones, as well as the Proud Boys and McInnes, former Ku Klux Klan leader David Duke and white nationalist Richard Spencer inexplicably remain on the platform. Given that both Duke and Spencer have been banned from traveling to European countries with laws against inciting hatred, it’s clear that enforcement is inconsistent.

Similar scenes have repeatedly played out on a number of platforms this year.

Discord is a chat platform designed for video game communities and a persistent and indispensable tool for white supremacist organizing. In early October, a journalist from Slate presented the company with a list of servers designed for denigrating minorities, indoctrinating individuals into white supremacy and doxing perceived enemies. Discord declined to take meaningful action or comment on its decision. Its terms of service state, “[Discord] has no obligation to monitor these communication channels but it may do so in connection with providing the Service.”

An even stranger scenario played out when a support specialist at Steam, a video game distribution platform, rescinded a disciplinary action taken against Andrew Auernheimer, a well-known and violence-minded white supremacist affiliated with the neo-Nazi Daily Stormer. Auernheimer, who was openly displaying two lightning bolts in his username, a reference to the Schutzstaffel of Nazi Germany, successfully lobbied for his sanction to be reversed over a flimsy claim that the symbols were meant as support for Vice President Mike Pence.

Auernheimer’s victory immediately became propaganda for the Daily Stormer.

Meanwhile, platforms such as Stripe continue to process monthly subscription payments for organizations like The Right Stuff, one of the white supremacist movement’s most popular and effective propaganda hubs specializing in radio programming. PayPal has taken a more aggressive line, but continues to struggle to keep up with the agility of cash-starved extremists who rapidly establish and publicize new accounts to continue receiving financial support.

YouTube came under fire this year after a CNN investigation found that the company was running ads for more than 300 major brands, including Under Armour, Hilton, Adidas and Hershey’s, on channels peddling extremism.

It’s a Public Health Problem

In an interview with the Intelligence Report, political scientist P.W. Singer compared the colonization of social media platforms by conspiracy and extremist content to a public health issue that requires a “whole-of-society effort” to address.

But tech companies must do a lot more. Singer described the need for “creating firebreaks to misinformation and spreads of attacks that target their customers” as well as “‘deplatforming’ proven ‘superspreaders’ of harassment.”

“Superspreaders are another parallel to public health, that in the spread of both disease and hate or disinformation, a small number of people have a massive impact,” Singer said. “Sadly, many of these toxic superspreaders are not just still active but rewarded with everything from more followers to media quotes to actual invitations to the White House.”

The Network Contagion Research Institute (NCRI) also embraces approaching online hate as a public health crisis. Organized last May, the group conducted a large-scale analysis of 4chan and Gab data and examined how extremist online communities succeeded in spreading hateful ideas and conspiracies to larger, mainstream platforms like Twitter and Reddit.

Echoing Singer, NCRI director Joel Finkelstein told The Washington Post, “You can’t fight the disease if you don’t know what it’s made of and how it spreads.”

NCRI’s research shows spikes in the use of antisemitic and racist terms around President Donald Trump’s inauguration in 2017 and then later that year around the violence at the “Unite the Right” rally in Charlottesville, Virginia. The NCRI report describes a “worrying trend of real-world action mirroring online rhetoric.”

Gab, which was founded as an alternative to Twitter and has become an accepting home base for much of the alt-right, responded to the study in a now-deleted tweet saying, “hate is a normal part of the human experience. It is a strong dislike of another. Humans are tribal. This is natural. Get over it.”

On Saturday, Oct. 27, 2018, a 46-year-old white man, Robert Bowers, walked into a Pittsburgh synagogue armed with semiautomatic weapons. He has been charged with the murders of 11 congregants. He was an active Gab user, and his posts showed a deep engagement with white nationalist ideas and antisemitic conspiracy theories, including those surrounding Soros that led Sayoc to mail the philanthropist a pipe bomb in late October.

Bowers is far from the first killer to have left a trail of online radicalization on platforms large and small. The reactive pattern, in which tech companies adjust policy after real-world violence only to let enforcement lag as time passes, remains woefully inadequate.

Tech companies need to understand the proliferation of hate online and take measures to address it, both as a public health issue and as a societal emergency.